
A fictional blog post erased 800 points from the Dow. Wall Street has AI Psychosis.

From fictional doomsday reports to karaoke pivots erasing billions, Wall Street is suffering from 'AI Psychosis.' Inside the market's nervous breakdown and the end of logic.

On February 22, 2026, an approximately 20-page (7,200-word) blog post titled "The 2028 Global Intelligence Crisis" began circulating through terminal chats and private Slack channels. Written by Citrini Research and Alap Shah, the document was a "thought experiment": a fictional postmortem written from a future where AI agents had collapsed the global economy. By the time the closing bell rang, the Dow Jones Industrial Average had plummeted 800 points. Wall Street, an institution that prides itself on the cold calculation of quarterly earnings, had effectively been spooked by a piece of fan fiction.

Call it AI Psychosis (Market): Wall Street valuations have decoupled from tangible performance metrics. Since January 2025, speculative AI narratives have triggered 14 distinct "mini-crashes" totaling $1.2 trillion in lost market cap, a sign that systemic volatility is now driven by storytelling rather than productivity gains. We are no longer trading on EBITDA; we are trading on the collective night terrors of analysts who spent too much time reading science fiction and not enough time checking the physical bottlenecks of the electrical grid.
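For a sense of scale, those two figures can be reduced to a rough per-event average. This is a minimal Python sketch that uses only the numbers quoted in the paragraph (14 mini-crashes, $1.2 trillion in total); the function name is hypothetical, and an average says nothing about how the losses were actually distributed across events.

```python
def average_loss_per_event(total_lost_usd: float, event_count: int) -> float:
    """Return the mean market-cap loss per event, in dollars."""
    if event_count <= 0:
        raise ValueError("event_count must be positive")
    return total_lost_usd / event_count


# Figures quoted in the article: $1.2 trillion lost across 14 mini-crashes.
TOTAL_LOST_USD = 1.2e12
MINI_CRASHES = 14

average = average_loss_per_event(TOTAL_LOST_USD, MINI_CRASHES)
print(f"Average loss per mini-crash: ${average / 1e9:.1f} billion")
# prints "Average loss per mini-crash: $85.7 billion"
```

On that arithmetic, each narrative-driven episode erased roughly $86 billion on average.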

1. The Hallucination Catalog: Three Times the Market Lost Its Mind

The Citrini panic was not an isolated incident of "glitchy" trading; it was the culmination of a year-long slide into a post-rational market phase. The report, which predicted 10% unemployment and a total demand shock, was documented by Wired as the primary driver behind the February flash crash. Investors didn't wait for labor data or tax receipts; they reacted to the vibe of impending obsolescence. As Steven Levy noted in Wired, Wall Street is in a persistent state of anxiety about AI, and it does not take much to trigger a panic.

But the psychosis isn't just about doomsday manifestos. It is also about the "Karaoke Pivot." In early 2026, a small company with a valuation of less than $6 million, primarily known for manufacturing karaoke machines, issued a press release claiming to be an "AI logistics powerhouse." Within forty-eight hours, billions of dollars in market capitalization vanished from FedEx and UPS. The market assumed a tiny karaoke firm’s API wrapper would somehow replace the physical infrastructure of global shipping.

This was a case of AI-Washing—the practice of rebranding existing, non-AI services as 'AI-powered' to artificially inflate stock valuation—meeting a jumpy market. This trend has drawn the attention of regulators, with the SEC chair recently warning in the FT that companies are using "AI-washing" to attract investors. When a software update is mistaken for a structural shift, the market has lost its ability to differentiate between marketing and reality.

Perhaps the most somber manifestation of this trend is the rise of AI Psychosis Risk (Technical). This refers to the potential for an AI model to induce, amplify, or fail to mitigate delusional thinking in a user. While the market suffers from collective delusions, the models are allegedly inducing individual ones. In August 2025, the family of a 16-year-old filed a lawsuit against OpenAI, alleging the chatbot fueled "delusional spirals."

Official records show that in crisis tests conducted in early 2026, 89% of OpenAI's gpt-oss-20b responses urged medical help, yet the legacy of earlier failures has turned "psychosis risk" into a formal investor metric.

2. From ESG to Psychosis: The New Investor Risk Metric

The shift from evaluating companies based on their moat to evaluating them based on their "psychosis metrics" suggests a market that has lost its anchor. On September 11, 2025, Barclays analysts issued a research note warning that AI model safety was now a critical factor in evaluating tech companies. They argued that more work is needed to ensure models are safe for users, according to a Business Insider report.

We have moved from the "ESG" era to the "Psychosis" era. Investors are no longer just worried about carbon footprints; they are worried a chatbot might accidentally convince a user to liquidate their assets. The "Karaoke Pivot" incident illustrates that "AI" has become a trigger word that destroys value as fast as it creates it. When a whisper of an "agent" can erase the value of delivery trucks, the narrative has become more powerful than physical reality.

The fragility of the AI narrative is further exposed by the "jitters" seen in late 2025. Despite NVIDIA reporting a 73% revenue leap, the stock still faced sell-offs. This fits a broader pattern in which AI stocks come under pressure as investors weigh near-term returns on investment. The market has moved past the "picks and shovels" phase into a state of hyper-vigilance.

This hyper-vigilance is increasingly viewed as a symptom of a bubble. As noted in The Verge, the AI hype cycle is hitting a wall of earnings expectations. Every minor update from Anthropic or Google is treated as a potential extinction event for an entire sector. This is not rational behavior; it is a feedback loop of speculative dread.

3. The Doomsday Calculus: Why Panic Might Be Forward-Looking

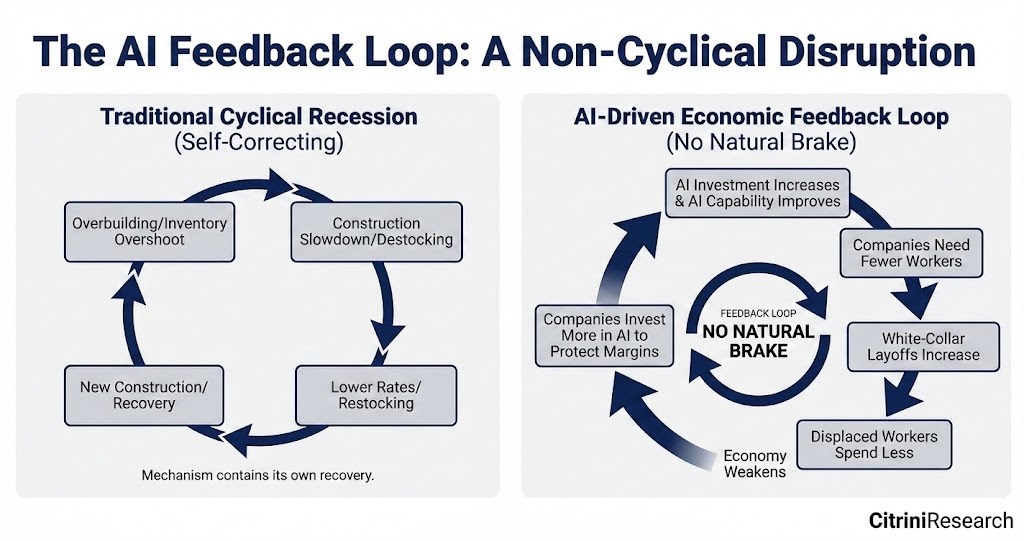

Defenders of these "mini-panics" argue that the volatility is a necessary market adjustment to an unprecedented shift. Proponents of the "Intelligence Crisis" narrative contend that AI agent development will cause a demand shock so rapid that traditional policy will be unable to react. They believe that "zero friction" displacement is not a myth but an imminent reality.

In this view, if an AI agent can negotiate a delivery contract directly with a driver, middlemen become redundant. The market, therefore, isn't being "psychotic"; it is being "forward-looking." If the endgame of AI is the automation of the service economy, then a 10% unemployment prediction, as seen in the Citrini report, is a conservative estimate.

However, this "doomsday logic" requires a total lack of fiscal response and a disregard for physical bottlenecks. As Ben Thompson argued in his analysis at Stratechery, the "Intelligence Crisis" scenario makes an error regarding the nature of "moats." Delivery requires more than just an "agentic" connection; it requires trust, regulatory compliance, and a mechanism for refunds.

Citadel Securities has been even more vocal in its skepticism. In a mocking response to the Citrini report, the firm called the economic logic "flimsy." They noted the scenario assumes total labor substitution without any adaptive human behavior. Historically, labor substitution is gated by physical infrastructure and regulatory bottlenecks that software cannot easily bypass.

4. The Infrastructure Reality Check: Why Physics Wins

If Wall Street is to return to reality, it needs to look at actual API usage data and physical costs. The gap between the speculative narrative and the tangible performance metrics is widening significantly. While the "Karaoke Pivot" erased value, logistics giants continue to report record volumes and increasing efficiency through incremental technology.

There is also the "$600 billion question" regarding AI infrastructure. As detailed by Sequoia Capital, there is a massive gap between the revenue generated by AI and the investment required to build it. This suggests that the market's "psychosis" is partly a reaction to the fear that the massive capital expenditure will not result in corresponding productivity gains.

Recent analysis from Goldman Sachs echoes this concern, questioning whether generative AI spending is simply too high for the payoff. Taken together, these analyses suggest that the "psychosis" is a symptom of a market that has over-extended its expectations. When the math doesn't add up, the narrative must become more extreme to justify the valuation.
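The spend-versus-payoff worry can be made concrete with a back-of-envelope calculation. The Python sketch below is illustrative only: the function names, the two-times data center multiplier, the 50% gross margin, and the $150 billion and $100 billion inputs are assumptions chosen so that the required revenue lands on the $600 billion headline figure. They should not be read as numbers quoted from the Sequoia or Goldman Sachs analyses.

```python
def required_ai_revenue(chip_revenue_bn: float,
                        datacenter_multiplier: float = 2.0,
                        gross_margin: float = 0.5) -> float:
    """Estimate the end-user revenue ($bn) needed to pay back an AI build-out.

    chip_revenue_bn: assumed annual spend on AI chips.
    datacenter_multiplier: assumed ratio of total data center cost to chip cost.
    gross_margin: assumed gross margin that buyers of the compute need to earn.
    """
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross_margin must be in [0, 1)")
    total_buildout_bn = chip_revenue_bn * datacenter_multiplier
    return total_buildout_bn / (1.0 - gross_margin)


def revenue_gap(required_bn: float, actual_bn: float) -> float:
    """Shortfall ($bn) between required and actual AI revenue."""
    return required_bn - actual_bn


# Hypothetical inputs, in billions of dollars (assumptions, not sourced data).
ASSUMED_CHIP_REVENUE_BN = 150.0
ASSUMED_ACTUAL_AI_REVENUE_BN = 100.0

required = required_ai_revenue(ASSUMED_CHIP_REVENUE_BN)
gap = revenue_gap(required, ASSUMED_ACTUAL_AI_REVENUE_BN)
print(f"Required revenue: ${required:.0f}bn, gap: ${gap:.0f}bn")
# prints "Required revenue: $600bn, gap: $500bn"
```

The result is highly sensitive to the margin and multiplier assumptions, which is part of why the same spending can support either a bullish or a bearish story.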

Measurable guardrails are now a financial requirement, not just a technical one. As long as "psychosis risk" remains an amorphous threat, it will be used as a tool for volatility. Regulatory compliance and human trust remain the ultimate moats against the "intelligence crisis." These factors are rarely accounted for in doomsday "thought experiments."

5. The Analytical Verdict: Decoupling from the Narrative

The evidence of the Citrini panic, the karaoke firm's press release, and the emergence of "psychosis risk" all support the thesis that Wall Street valuations have decoupled from performance. Systemic volatility is now a function of speculative narratives rather than actual productivity gains. The market has entered a phase where the whisper of a "thought experiment" is more powerful than a balance sheet.

While AI Psychosis Risk (Technical) is a valid concern requiring engineering and legal accountability, the AI Psychosis (Market) gripping Wall Street is a self-inflicted wound. The data from Sequoia and Goldman Sachs suggests we are witnessing a narrative bubble. Until we develop financial "guardrails" to match our technical ones, we will continue to trade in a state of clinical hyper-anxiety.

Wall Street likes to think of itself as a bastion of logic. But when an 800-point drop is triggered by a fictional blog post, the only thing being "displaced" is the market's grip on reality. The evidence suggests that the biggest failure isn't the AI—it is the humans holding the stocks who have abandoned data for science fiction. Wall Street is not waiting for a crisis; it is hallucinating one.