
A finance worker spent 90 minutes in a deepfake video conference. It cost HK$200 million.

A forensic analysis of the $25M deepfake heist and the systemic collapse of video verification in an age where GAN/RVC pipelines have rendered trust obsolete.

The heist didn’t involve a ladder, a glass cutter, or a getaway driver idling in a subterranean garage. It involved a calendar invite. In early 2024, a junior finance employee at a multinational firm in Hong Kong joined a video call that appeared to be populated by the company’s Chief Financial Officer and several other colleagues. For 90 minutes, the employee took direction from the digital avatars of his superiors, ultimately executing 15 transfers across five different bank accounts. By the time the call ended, HK$200 million (approximately $25.6 million USD) had vanished into the ether. This was not a failure of individual judgment, but a systemic collapse of the medium itself.

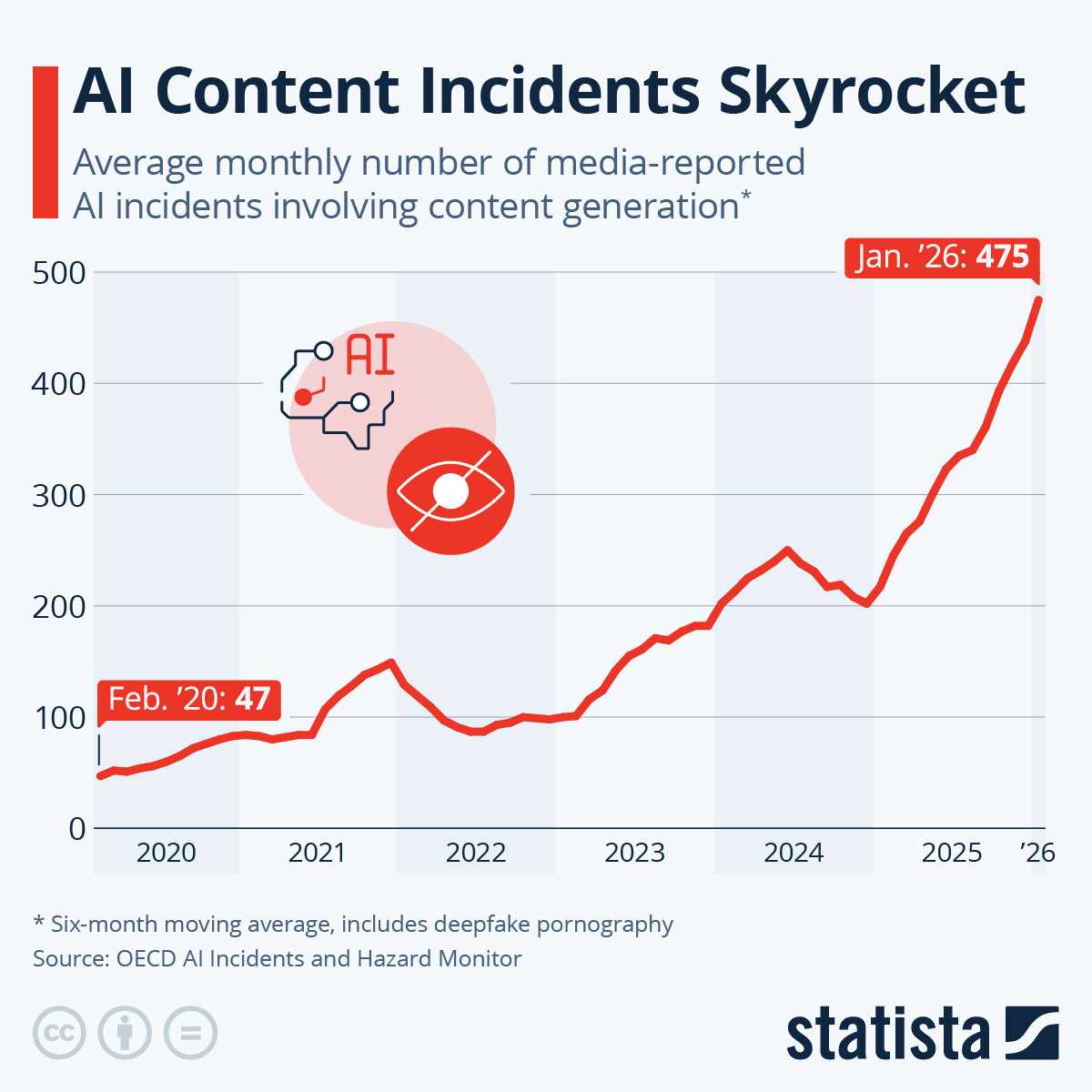

The commoditization of Generative Adversarial Networks (GANs) and Retrieval-based Voice Conversion (RVC) has rendered traditional video and audio identity verification obsolete. The volume of deepfake content online has grown 464% since 2022, and existing corporate and regulatory defenses have not kept pace. As the Hong Kong incident demonstrates, we have entered an era where "seeing is believing" is no longer a security posture; it is a liability. This report will analyze the technical mechanics of the HK$200 million heist, the rapid escalation of synthetic media deployment, and why our current defensive frameworks are failing to account for the total erosion of digital trust.

1. The Script That Stole $25 Million

The Hong Kong heist remains the most sophisticated documented use of synthetic media in corporate fraud to date. According to the Hong Kong Police, the victim was initially targeted via a phishing email purportedly from the company’s UK-based CFO, discussing a "secret transaction." While the employee was initially skeptical, those doubts were surgically removed during the subsequent video call. The attackers understood that a single voice is a suspicion, but a room full of colleagues is a consensus.

The attackers ran a multi-party synthetic simulation: they didn't just clone the CFO; they cloned the entire meeting. The victim reported that everyone on the call looked and sounded like colleagues he recognized. This was achieved by feeding publicly available footage of previous corporate meetings through a real-time deepfake pipeline. A deepfake replaces a person in an existing image or video with someone else's likeness using artificial neural networks. The simulation was so convincing that the employee spent over an hour interacting with ghosts.

The failure point here was not the employee's lack of skepticism. The failure was the assumption that a 90-minute live interaction provides a biological proof of life. It doesn't.

The social engineering was clinical. The "CFO" gave instructions, other "colleagues" chimed in with supporting details, and the employee followed the protocol for what appeared to be a high-stakes corporate directive. The transfer of HK$200 million across five accounts was processed as routine until the employee checked with the head office several days later. By then, the "cast" had logged off, and the funds were gone. According to CNN, the perpetrators used existing footage to recreate the likenesses. How the live interaction was driven is less clear, but it was likely managed through a script or a live actor whose features were mapped onto the synthetic masks.

2. The Velocity of the Synthetic Arms Race

The escalation from "funny face-swapping apps" to $25 million heists happened with a velocity that caught both the public and regulators off guard. This was not a sudden leap, but a steady erosion of the barrier between simulation and reality. Earlier precedents showed the trajectory of this threat. As reported by Forbes, a bank manager in the United Arab Emirates was tricked into transferring $35 million in 2020 after hearing the cloned voice of a company director.

The timeline of deployment shows a clear move toward geopolitical and financial sabotage.

- 2020: The advocacy group RepresentUs released a PSA featuring deepfakes of Kim Jong Un and Vladimir Putin warning about the fragility of democracy.

- 2022: A low-quality deepfake of President Volodymyr Zelenskyy appeared on social media, purportedly showing him telling Ukrainian troops to surrender; technical analysts quickly debunked it.

- 2024: In January, a robocall featuring a cloned voice of President Joe Biden was sent to thousands of New Hampshire voters, telling them to "save your vote" and skip the primary, as documented by NBC News.

This timeline is backed by raw data. A 2024 report by Home Security Heroes found that the volume of deepfake content online has increased by 464% since 2022. While 98% of deepfake videos are non-consensual pornography, a devastating statistic that illustrates the primary use case for this technology, the remaining 2% includes the political disinformation and financial fraud traced above. According to McAfee, one in four people surveyed has already experienced an AI voice scam or knows someone who has.
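A quick reading aid for the headline figure (simple arithmetic on the number above, not additional data): a 464% increase means the current volume is 5.64 times the 2022 volume, not 4.64 times. Writing V for deepfake volume, a label introduced here only for the arithmetic:

```latex
V_{\text{now}} = V_{2022} \times (1 + 4.64) = 5.64\,V_{2022}
```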

3. The Mechanics of the GAN/RVC Pipeline

The technical leap that enabled the Hong Kong heist was the commoditization of the GAN/RVC pipeline. What was once the domain of state actors is now available as a download on GitHub. Generative Adversarial Networks (GANs) are the realism engines of the deepfake world. A GAN consists of two neural networks: a generator that creates the image and a discriminator that tries to guess if the image is fake. They "compete" against each other until the generator can produce an image so realistic that the discriminator cannot tell the difference.
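To make "compete until the discriminator cannot tell the difference" concrete, the sketch below evaluates the textbook GAN value function on a deliberately toy problem. Assumptions, all introduced for illustration: real and generated data are one-dimensional unit-variance Gaussians, and the discriminator is the theoretical optimum D*(x) = p_real(x) / (p_real(x) + p_fake(x)) rather than a trained network. Nothing here reflects how any particular face-swap tool is built. As the fake distribution's mean approaches the real one, the value falls to its minimum of -log 4, which is exactly the point where D* outputs 0.5 for every input.

```python
import math

def log_normal_pdf(x: float, mu: float, sigma: float = 1.0) -> float:
    """Log-density of N(mu, sigma^2) at x."""
    return -0.5 * ((x - mu) / sigma) ** 2 - math.log(sigma * math.sqrt(2 * math.pi))

def log_add(a: float, b: float) -> float:
    """Numerically stable log(exp(a) + exp(b))."""
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))

def gan_value(mu_real: float, mu_fake: float,
              lo: float = -20.0, hi: float = 20.0, steps: int = 8000) -> float:
    """V(D*, G) = E_real[log D*(x)] + E_fake[log(1 - D*(x))] with the optimal discriminator.

    Both distributions are unit-variance Gaussians (a toy assumption); the two
    expectations are approximated with a midpoint Riemann sum over [lo, hi].
    """
    dx = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * dx
        lp_real = log_normal_pdf(x, mu_real)
        lp_fake = log_normal_pdf(x, mu_fake)
        lp_sum = log_add(lp_real, lp_fake)
        log_d = lp_real - lp_sum      # log D*(x): discriminator says "real"
        log_not_d = lp_fake - lp_sum  # log(1 - D*(x)): discriminator says "fake"
        total += (math.exp(lp_real) * log_d + math.exp(lp_fake) * log_not_d) * dx
    return total

if __name__ == "__main__":
    # Generator mean drifting toward the real mean (0.0) lowers V toward -log 4
    for gap in (4.0, 2.0, 1.0, 0.0):
        print(f"generator mean off by {gap}: V = {gan_value(0.0, gap):.4f}")
    print(f"-log 4 = {-math.log(4):.4f}")
```

In real training neither network is optimal: generator and discriminator are updated by alternating gradient steps, which is one reason practical GAN training can be unstable.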

Complementing the visual is RVC (Retrieval-based Voice Conversion). This is a deep learning technique used to clone or convert voices with high fidelity using target voice samples. In 2020, cloning a voice required hours of clean audio data. By 2024, RVC models could achieve near-perfect mimicry with as little as 10 to 30 seconds of source material. This material is provided willingly by every executive in every podcast appearance, keynote, or earnings call they participate in.
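The "retrieval" in RVC refers to a lookup step. Feature frames extracted from the attacker's speech are matched against an index of feature frames extracted from the target's recordings, and the closest matches are blended in so the output carries more of the target's timbre. The sketch below shows only that step, as a brute-force nearest-neighbour search with inverse-squared-distance weights and a mixing ratio. It is a simplified illustration: the tiny 2-D vectors stand in for real speech-feature frames, and the function name and the `index_rate` parameter name are assumptions of this sketch, not a reproduction of any RVC codebase.

```python
import math

def retrieve_and_blend(source_frames, target_index, k=2, index_rate=0.75, eps=1e-8):
    """Pull each source feature frame toward the target speaker's stored frames.

    source_frames: feature vectors from the attacker's speech (one per frame)
    target_index:  feature vectors extracted from the target's recordings
    index_rate:    0.0 keeps the source features, 1.0 uses only retrieved ones
    """
    blended = []
    for frame in source_frames:
        # k nearest target frames by Euclidean distance (brute force for clarity)
        nearest = sorted(target_index, key=lambda t: math.dist(frame, t))[:k]
        # Closer matches get more weight: proportional to 1 / distance^2
        weights = [1.0 / (math.dist(frame, t) ** 2 + eps) for t in nearest]
        total = sum(weights)
        retrieved = [
            sum(w * t[d] for w, t in zip(weights, nearest)) / total
            for d in range(len(frame))
        ]
        # Linear mix of retrieved target features and the original source features
        blended.append([index_rate * r + (1.0 - index_rate) * s
                        for r, s in zip(retrieved, frame)])
    return blended

# Toy "victim" index: two nearby frames and one distant outlier
target = [[1.0, 0.0], [0.0, 1.0], [10.0, 10.0]]
print(retrieve_and_blend([[0.0, 0.0]], target, k=2, index_rate=0.5))  # [[0.25, 0.25]]
```

In a full voice-conversion pipeline, blended features like these (together with a pitch track) would drive a neural synthesizer that renders the final audio; that stage is outside the scope of this sketch.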

The "cost to produce" barrier has collapsed entirely. What once required a Hollywood VFX studio now runs on a consumer laptop with an NVIDIA RTX GPU. And because digital watermarking has not kept pace with distribution, once a synthetic file is generated there is no reliable, platform-agnostic way to flag it as "AI-generated" before it reaches a human victim. OpenAI reported blocking over 250,000 requests to generate images of major US political candidates in a single month, but those refusals apply only to OpenAI's own tools. Open-source models have no such guardrails and are increasingly used for high-stakes fraud.
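One reason watermarks struggle is fragility. The sketch below uses the simplest possible scheme, hiding one bit in the least significant bit of each pixel value, and then simulates a lossy re-encode, of the kind a platform might apply on upload, by snapping pixel values to multiples of 4. This naive scheme is a stand-in chosen for illustration (production watermarking is designed to be more robust than this), and the function names and quantization step are assumptions of the sketch. The mark survives a lossless copy but is erased by the re-encode.

```python
def embed_lsb(pixels: list[int], bits: list[int]) -> list[int]:
    """Write one watermark bit into the least significant bit of each pixel (0-255)."""
    if len(bits) > len(pixels):
        raise ValueError("message longer than carrier")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the LSB, then set it to the bit
    return marked

def extract_lsb(pixels: list[int], n_bits: int) -> list[int]:
    """Read the watermark back from the first n_bits pixels."""
    return [p & 1 for p in pixels[:n_bits]]

def lossy_reencode(pixels: list[int], step: int = 4) -> list[int]:
    """Crude stand-in for recompression: snap each value down to a multiple of step."""
    return [p - (p % step) for p in pixels]

original = [200, 201, 54, 97, 130, 18, 77, 240]
message = [1, 0, 1, 1, 0, 1, 0, 0]
marked = embed_lsb(original, message)
print(extract_lsb(marked, len(message)))                  # [1, 0, 1, 1, 0, 1, 0, 0]
print(extract_lsb(lossy_reencode(marked), len(message)))  # [0, 0, 0, 0, 0, 0, 0, 0]
```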

4. The Liar's Dividend and the Death of Proof

The most insidious byproduct of the deepfake era isn't the theft of HK$200 million—it’s the Liar's Dividend. This is the benefit that accrues to liars from the public's awareness of deepfakes, allowing them to claim that real evidence is fake. When everything can be faked, nothing is definitively real. This creates a "post-truth" environment where corporate liability becomes an insurance nightmare. If a CEO is recorded making a controversial comment, they can simply claim it was an RVC-based clone.

We saw the power of this technology to reach mass audiences in January 2024, when non-consensual explicit deepfake images of Taylor Swift spread across X; one post alone garnered over 47 million views in less than 17 hours, according to NBC News. The incident was so pervasive that it forced X to temporarily block searches for the artist's name, as reported by The Guardian. This wasn't just a failure of content moderation; it was a proof of concept for how quickly synthetic media can overwhelm institutional defenses.

Corporate insurance models are currently failing to account for this. Most "social engineering" riders were written for simple email phishing, not 90-minute real-time simulations. If a CFO authorizes a wire transfer, they can now plausibly claim their likeness was hijacked by a GAN. This creates a legal vacuum where the burden of proof shifts from "is this real?" to "can you prove this isn't fake?" Reuters reports that financial institutions are struggling to update their "Know Your Customer" (KYC) protocols to detect synthetic identities created for the sole purpose of money laundering.

5. The Myth of the Educated Skeptic

Defenders of the current status quo argue that public skepticism is increasing, making deepfakes less effective as users learn to distrust digital media. A report from the Brookings Institution suggests that as the public becomes more aware of AI’s capabilities, the "novelty" of deepfakes will diminish, leading to a more critical consumption of media. This argument posits that we are in a temporary "window of vulnerability" that will close as digital literacy catches up to the technology.

However, the evidence from the Hong Kong heist suggests this view is overly optimistic. Skepticism is a cognitive process; it requires time and attention. In a high-pressure 90-minute business call, the brain’s cognitive filters are naturally lowered to facilitate collaboration. The technical realism of current RVC models captures the specific cadence, stutter, and regional accent of a target. This effectively bypasses the "uncanny valley" that once signaled a fake to a human observer.

As noted in Wired, while there are legitimate creative uses for this tech, the malicious applications are inherently more scalable. You cannot "train" a junior accountant to be more skeptical than a GAN is realistic. The asymmetry of the threat is the core issue. An attacker only needs to be convincing once for 90 minutes; the employee must be a forensics expert every minute of their working life. Expecting human intuition to solve a problem created by neural networks is like asking a librarian to stop a cyberattack with a card catalog.
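The asymmetry can be put in numbers with a deliberately simple model: assume each deepfake attempt is independent and the employee spots the fake with the same probability p every time. The values p = 0.99 and n = 100 attempts are hypothetical, chosen only to show the shape of the problem:

```latex
P(\text{never fooled in } n \text{ attempts}) = p^{n}, \qquad
0.99^{100} = e^{100 \ln 0.99} \approx e^{-1.005} \approx 0.366
```

Even at 99% per-attempt accuracy, the chance of being fooled at least once is about 1 - 0.366 = 0.634, or roughly 63%, and it only grows with n.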

6. The Post-Biometric Security Mandate

The Hong Kong heist proves that traditional Two-Factor Authentication (2FA) and video verification are insufficient. If your "second factor" is a video call with a person you trust, and that person is a synthetic simulation, the security loop is broken. The regulatory response has been largely reactive. The FCC officially banned AI-generated voices in robocalls in February 2024, but this only addresses one specific delivery vector. It does not stop a scammer from using a cloned voice in a private WhatsApp call or a Zoom meeting.

We are moving toward a necessary future of cryptographically signed identity.

- Out-of-band verification: High-stakes transfers must be authorized through a separate, non-digital channel.

- C2PA Metadata: Implementation of hardware-level digital signatures on cameras and microphones to prove provenance.

- Biometric-independent trust: Moving away from "face and voice" as proof of identity and toward private keys held in hardware enclaves.
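The common thread in these measures is that approval rests on a secret key rather than on a face or a voice. The sketch below shows the shape of that check for a transfer request: the approver's device signs a canonical encoding of the exact request, and the payment system refuses anything whose signature fails to verify or whose one-time nonce has already been used. To stay within Python's standard library it uses a shared-secret HMAC-SHA256 as a stand-in; a deployment along the lines of the bullets above would more likely use asymmetric keys held in a hardware enclave so the signing key never leaves the device. The function names, request fields, and account label are hypothetical.

```python
import hashlib
import hmac
import json
import secrets

def canonical(request: dict) -> bytes:
    """Serialize deterministically so signer and verifier hash identical bytes."""
    return json.dumps(request, sort_keys=True, separators=(",", ":")).encode("utf-8")

def sign_request(key: bytes, request: dict) -> str:
    """Approver side: MAC over the exact payee, amount, and nonce being authorized."""
    return hmac.new(key, canonical(request), hashlib.sha256).hexdigest()

def approve_transfer(key: bytes, request: dict, signature: str, seen_nonces: set) -> bool:
    """Payment side: release funds only for a valid, never-before-seen signed request."""
    nonce = request.get("nonce")
    if not nonce or nonce in seen_nonces:
        return False  # missing or replayed approval
    expected = sign_request(key, request)
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        return False  # request altered after signing, or signed with another key
    seen_nonces.add(nonce)
    return True

# Whoever appears on the video call is irrelevant; only the signature counts.
approver_key = secrets.token_bytes(32)
request = {"payee": "ACCT-001", "amount_hkd": 200_000_000, "nonce": secrets.token_hex(16)}
sig = sign_request(approver_key, request)
seen = set()
print(approve_transfer(approver_key, request, sig, seen))  # True
print(approve_transfer(approver_key, request, sig, seen))  # False: replayed approval
```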

The industry is beginning to recognize that biometrics are no longer "secret" or "unique" if they can be scraped from a LinkedIn profile. Relying on a person's face to verify their identity is now as secure as using their name as a password.

If your corporate security policy still allows for the transfer of millions of dollars based on a video call alone, you have already been breached. You just don't know it yet.

7. Verdict: The End of Digital Trust

The evidence presented—from the HK$200 million heist to the 464% increase in synthetic media volume—supports the thesis that traditional identity verification is now obsolete. The commoditization of GANs and RVC has created a permanent asymmetry between the attacker and the defender. The attacker only needs 30 seconds of your voice to bypass a $25 million defensive wall; the defender needs to be right 100% of the time in every "live" interaction.

The failure is not in the "detection" of AI. Detection will always be a losing game of cat-and-mouse where the mouse has an infinite budget and the cat is a distracted human. The failure is the continued reliance on legacy human senses for high-stakes authorization. The Hong Kong employee didn't fail his company; the company's reliance on the "visual presence" of its CFO failed him. We have spent decades building a digital world based on the assumption that seeing a person means they are there.

We are entering a period where digital trust must be rebuilt from the ground up through cryptographic signatures rather than human recognition. Until that infrastructure is universal, the "Liar's Dividend" will continue to pay out, and the next HK$200 million is already being scheduled for a Monday morning calendar invite. The simulation is now indistinguishable from the reality it seeks to replace, and our skepticism is no match for the math. The medium of video has been broken, and it is time we stopped pretending we can fix it with a better pair of glasses.