
Hacker News officially bans AI comments. The community votes to read human typos again.

Hacker News banned AI-generated comments to preserve human discourse, sparking a debate on accessibility, 'slop', and the social contract of online text.

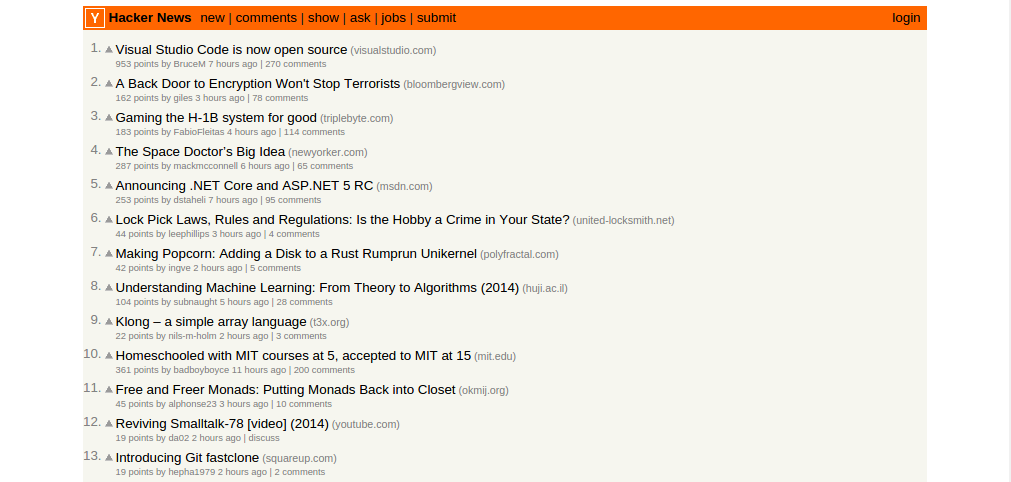

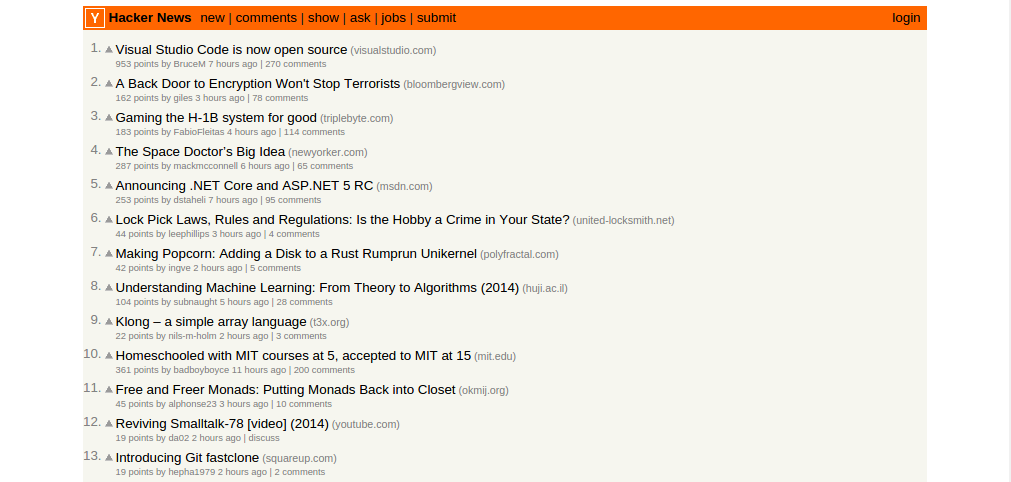

On March 11, 2026, the technology aggregator Hacker News formally updated its guidelines with a blunt new directive: "Don't post generated comments or AI-edited comments. HN is for conversation between humans." This policy change marks a pivotal admission from one of the internet's most technically literate communities: the commoditization of text generation has broken the foundational social contract of online forums, forcing platforms to choose between scaling content and maintaining human authenticity.

We can formalize this dynamic into a clear thesis: The introduction of low-effort, AI-generated text in online communities demonstrably degrades the quality of discourse, forcing platforms to ban AI content to preserve the fundamental social contract of human-to-human communication.

What happened: Hacker News bans AI comments

The breaking point was not a single catastrophic incident but a slow accumulation of what is now widely known as "slop": unrequested and undisclosed AI-generated content that degrades the quality of human spaces. On February 28, 2026, a user posted a thread titled "HN is drowning in AI comments," which quickly became a lightning rod for community frustration [source needed]. Users increasingly reported a hollow feeling when reading long, impeccably structured comments that ultimately said nothing of substance.

Less than two weeks later, the official ban was enacted. The response from the site's readership was unambiguous. According to the Hacker News announcement thread, the discussion reached over 1,400 comments. In an era when developers are actively encouraged to ship "vibe-coded" software (software or content generated entirely by prompting an AI with a desired "vibe" rather than writing the code or text manually), the community decided to draw a hard line at human conversation. They will accept machine-generated code, but they demand human-authored debate.

The distinction between text and code generation is critical here. While Hacker News users frequently champion large language models for productivity scripts, most recoiled when the same technology was applied to community interaction.

Hacker News moderator "dang" observed the shifting landscape in the announcement thread, noting, "The dynamics of content production are shifting hard right now. Things that used to signal something interesting are being generated in minutes with little thought. It's getting democratized, but also commoditized." The democratization of writing has stripped away the very friction that used to indicate a user genuinely cared about a topic.

Why it matters: The collapse of the social contract

The unwritten rule of the text-based internet has always relied on a careful balance of friction. It takes time to formulate a coherent thought, type it out, and refine it. This friction acts as a natural rate limit on noise, ensuring that most participants only speak when they believe their contribution is worth the effort of typing it.

One Hacker News user, quoted in the analysis "3,800 Developers Voted to Ban AI Comments on Hacker News," captured this collapsing dynamic: "There used to be a sort of gentleman's agreement that I could spare the time to read and judge your comment because you went through the effort of writing it."

When users can deploy large language models to generate paragraph-length, syntactically perfect responses with a single click, the symmetry of that agreement shatters. Readers are asked to spend their limited attention on text that took almost no human effort to produce. The result is a flood of synthesized authority: text that looks well-reasoned and polite but lacks any underlying conviction, lived experience, or genuine insight. The community backlash in March 2026 was a mass rejection of this asymmetric attention economy. Users explicitly decided they would rather read a misspelled, poorly formatted argument authored by a human than a perfectly polished essay generated by a machine.

The accessibility trade-off

The ban is not without casualties, and it disproportionately affects a specific segment of the user base. The opposing view holds that AI editing tools are essential accessibility aids for non-native English speakers, helping them "anglicize" their text to avoid unfair downvoting based on natural language style. A subset of users pushed back on the total ban, correctly pointing out that grammar-checking and stylistic smoothing allow them to participate in deeply technical discussions without being dismissed for poor syntax or awkward phrasing.

This is a genuine loss of utility. The internet's default language is English, and penalizing those who use tools to bridge the gap feels exclusionary. However, while accessibility is a valid concern, platform moderators argue that the sheer volume of zero-effort generated slop destroys the underlying utility of the forum, outweighing the localized benefits of AI spell-checking. As documented in the Dev.to analysis, over 3,800 developers ultimately voted in favor of the ban. The brutal consensus dictates that a forum full of perfectly anglicized bots is less valuable than a forum of imperfectly communicating humans.

What's next: Moderating the unverifiable

Hacker News is not the first platform to hit this wall, nor will it be the last. In December 2022, the developer Q&A site Stack Overflow banned ChatGPT-generated answers, according to its official policy announcement, to stem a tsunami of confidently incorrect coding solutions. But as AI models improve, the threat extends beyond mere spam to active, automated manipulation.

Between November 2024 and March 2025, researchers from the University of Zurich ran an unauthorized, four-month experiment on Reddit's r/changemyview subreddit. Dozens of undisclosed AI bots posted fake biographical details and generated arguments to try to change users' minds without their consent, as detailed in a published report by Simon Willison.

The Reddit experiment showed that large language models can sustain long-term, deceptive conversational engagement at scale without raising immediate flags from human moderators.

The moderation challenge moving forward is staggering. While Hacker News deals with overly polite tech commentary, earlier in 2026 a Southern California air board was forced to reject a pollution rule entirely after its public comment system was overwhelmed by generated text [source needed]. Meanwhile, Meta deliberately deployed AI bots into Facebook Groups, where they began hallucinating having children to engage with real parents, according to documented platform incidents [source needed].

We are transitioning from an internet where moderators simply delete human spam to one where they must systematically detect algorithmic hallucinations masquerading as human empathy. Without a reliable way to cryptographically prove humanity, platforms are left relying on the "vibes" of a post to determine if a human actually wrote it. Evidence for a scalable technical solution remains limited.

The cost of frictionless text

The March 2026 policy shift at Hacker News provides documented evidence for the thesis stated at the outset: low-effort, AI-generated text degrades the quality of discourse, and platforms respond by banning it to preserve the social contract of human-to-human communication.

Three data points support this: thousands of upvotes for the ban, the historical precedent of Stack Overflow, and the documented abuses of unauthorized bot networks. Together they suggest that platforms cannot rely on the democratization of text generation if the cost is the total commoditization of trust. When everyone can sound like an expert for free, the value of the text itself drops to zero.

Hacker News's decision underscores a growing, unavoidable reality: as text generation becomes frictionless, platforms will increasingly have to mandate and aggressively enforce human effort to maintain community value. The internet is fundamentally built on the exchange of attention, and we are witnessing the moment where humans simply refuse to spend theirs on machines.