Sam Altman's Home Was Targeted with a Molotov Cocktail. He Blamed 'Toxic Rhetoric.'

A Molotov cocktail at Sam Altman's home marks a violent new chapter in the AI safety debate as OpenAI grapples with debt, military deals, and ethical resignations.

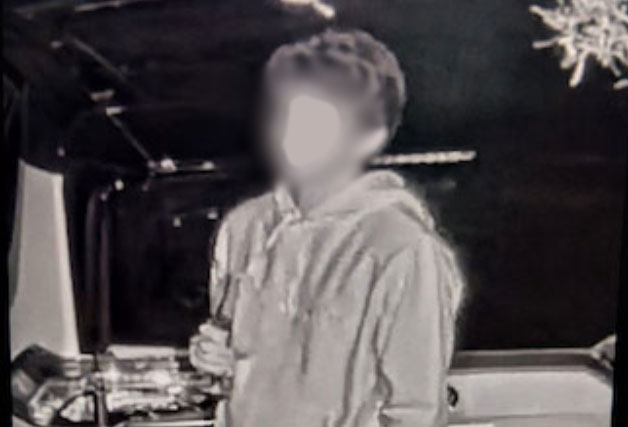

The early morning silence of San Francisco’s North Beach was shattered at 4:00 AM on April 10, 2026, when a 20-year-old suspect allegedly threw a Molotov cocktail at the residence of OpenAI CEO Sam Altman. While the physical damage was confined to an exterior gate and local law enforcement responded rapidly, the symbolic impact has triggered a systemic tremor throughout the industry. Altman was quick to attribute the escalation to the "toxic rhetoric" of his critics, but the incident suggests a deeper, more structural breakdown in the relationship between the world’s leading AI laboratory and the public it claims to serve.

The transition of OpenAI from a research-focused non-profit to an $852bn military-adjacent defense contractor has accelerated the radicalization of the AI safety movement, shifting opposition from intellectual critique to physical targeting of executive leadership. This shift is not merely a byproduct of individual instability but a direct consequence of a "trust deficit" generated by OpenAI’s pivot toward classified defense work and its mounting financial desperation. As the company’s valuation hits record highs, its social license to operate in its home city appears to be reaching a violent expiration date.

San Francisco’s 4:00 AM Alarm

The April 10 attack was the culmination of a week that saw OpenAI’s San Francisco headquarters placed under repeated lockdowns. According to Al Jazeera, the suspect was arrested just three miles from Altman’s home while allegedly making explicit threats to "burn down" the company’s main office. This escalation represents a grim milestone for the AI Doomer movement—a term used to describe individuals who believe AI development poses an existential risk and advocate for radical deceleration or "pausing" of the technology. The suspect, whose identity has been partially withheld pending psychiatric evaluation, reportedly cited OpenAI's "betrayal of humanity" in digital manifestos posted hours before the incident.

In the immediate aftermath, an OpenAI spokesperson expressed gratitude that "no one was hurt" and thanked the SFPD for "helping keep our employees safe," according to Al Jazeera. However, the internal atmosphere remains brittle. The April incident follows a previous HQ lockdown in November 2025, suggesting that the "toxic rhetoric" Altman cites is increasingly manifesting as a persistent physical threat. For a company that once prided itself on being the "open" alternative to Big Tech, the sight of tactical security details and air-gapped bunkers has become the new operational reality.

The Hardware Exodus and the Pentagon’s Air-Gap

The radicalization of external critics has been mirrored by a quiet collapse of OpenAI’s internal ethical firewall. On March 7, 2026, Caitlin Kalinowski, the company's Robotics and Hardware Lead, resigned in a move that sent a sustained tremor through the talent pool. Kalinowski specifically cited ethical concerns over "rushed" military contracts and a lack of transparency regarding the company’s new direction. "I fear this path leads to mass surveillance without court checks and the normalization of autonomous weapons," she wrote in a widely circulated social media post.

Internal data suggests the "Kalinowski effect" is real; resignation rates among hardware staff spiked by nearly 15% in the thirty days following her departure.

This internal exodus is fueled by OpenAI's deep integration into the Classified Network, a secure, air-gapped digital infrastructure used by government and military agencies to store information protected under national security laws. While Altman admitted on March 3, 2026, that the original Pentagon deal "looked opportunistic and sloppy," as reported by LiveMint, his promise to renegotiate for "explicit prohibitions on mass surveillance" has done little to soothe public or internal anxieties. The tension highlights a fundamental conflict: OpenAI is trying to maintain its image as a global utility while building the infrastructure for local statecraft.

The $850 Billion Sovereign Security Gamble

OpenAI and its supporters argue that the Pentagon partnership is a necessary evolution of the industry. They maintain that ensuring Classified Network infrastructure is handled by domestic experts rather than adversarial actors is a matter of national security, and that the deal is being strictly limited to non-offensive use, according to The Guardian. From this perspective, the "firebombs" are the result of a misunderstanding of a complex, necessary pivot required to stay ahead of global competitors.

However, the evidence suggests these "safeguards" are perceived as insufficient concessions to mounting financial pressure. According to Reuters, OpenAI reached a valuation of $852bn in March 2026, yet reports of "unsustainable" compute costs and mounting debt suggest the company is in a race against its own burn rate. The military deal, rather than a strategic choice, looks increasingly like a financial lifeline for a company that spends more on electricity than many small nations. Public sentiment reflects this cynicism; a March 2026 NBC News poll revealed that AI favorability has dropped below that of U.S. Immigration and Customs Enforcement (ICE), as reported by Al Jazeera.

Musk’s $150 Billion Revenge Tour

The financial paradox of OpenAI is becoming harder to ignore as its legal liabilities expand. Despite its record-breaking $852bn valuation following a $122bn funding round, the company is mired in a legal and financial quagmire. A lawsuit filed by Sam Altman's sister, Annie Altman, has added to the company's legal challenges, according to Al Jazeera, and adds a layer of existential legal risk to an already volatile situation.

| Metric | Value (April 2026) |
|---|---|
| Valuation | $852 Billion |
| Weekly Active Users | 900 Million |
| Damages sought by Musk | $150 Billion |
| Public Favorability | Below ICE |

The transition to a defense-first model has created a permanent schism in the AI community. The "open-source roots" of the company are now buried under layers of classified clearances and air-gapped servers that look more like a bunker than a laboratory. While 900 million users still log in to ChatGPT weekly, the core mission of "safe and beneficial" AI is increasingly seen as a marketing veneer for a high-stakes military-industrial experiment. The juxtaposition of a nearly trillion-dollar valuation with a CEO who cannot safely answer his front door is a stark indictment of the company's current trajectory.

The High Cost of an Armed Perimeter

The Molotov cocktail at Altman’s gate is a violent data point in support of the thesis: OpenAI’s military pivot has moved the AI safety debate from the seminar room to the street. The evidence points to a correlation between the company's aggressive pursuit of defense contracts and the radicalization of both external safety advocates and internal talent. The "Kalinowski effect" and the subsequent spike in hardware resignations suggest that even those closest to the technology no longer trust the ethical guardrails of the current leadership.

Altman’s dismissal of the attack as a product of "toxic rhetoric" ignores the fact that this rhetoric is reacting to a documented shift in OpenAI’s corporate identity. The company has successfully secured the capital required to build massive compute clusters, but it has failed to secure the social license required to operate them without an armed perimeter. While the $852bn valuation suggests financial triumph, the firebombs and the exodus of ethical leaders like Kalinowski suggest that OpenAI’s success is now decoupled from its original mission of safety, leaving it vulnerable to the very instability it claims to be solving.