
Microsoft pledged to be carbon negative by 2030. Its AI build-out just raised emissions by 29%.

Microsoft and Google’s climate goals are crashing into AI hardware. A deep dive into how LLMs are drying up aquifers and bloating power grids for token generation.

For decades, Silicon Valley has successfully marketed itself as a clean industry—a weightless world of bits and glass that stood in contrast to the soot-stained smokestacks of the 20th century. This aesthetic of digital etherealness allowed tech giants to issue climate pledges promising a future where humanity could compute its way out of ecological collapse. However, the arrival of generative AI has unceremoniously punctured this bubble. The physical manifestation of artificial intelligence is not a shimmering digital consciousness but a regression toward resource-intensive industrialism.

The rapid expansion of generative AI infrastructure has created a measurable decoupling between tech industry revenue and sustainability targets. Since 2020, Microsoft’s total carbon emissions have increased by 29.1%, a strong signal that the current approach to LLM scaling is fundamentally at odds with the company’s stated goal of carbon negativity by 2030. As Microsoft and Google aggressively build out the planetary-scale compute required to win the token arms race, their environmental ledgers are bleeding red. The narrative of the green tech industry is no longer being stretched; it is being shredded by the thermodynamic reality of the H100 GPU and the unyielding physics of evaporative cooling.

The Hydrology of the Hype Cycle

In Iowa, the communities that host Microsoft’s data centers have become an unwitting laboratory for the secondary externalities of the AI boom. While the marketing for GPT-4 focused on its ability to pass professional exams, the physical reality of its training was far more terrestrial. During the final months of the model’s training in 2022, the local water utility reported a spike in consumption. The culprit was the massive Microsoft data center campus cooling the clusters required by OpenAI.

This surge highlights a metric that has suddenly become the industry’s most uncomfortable indicator: Water Usage Effectiveness (WUE). WUE is the ratio of the water a site uses, chiefly for cooling, to the energy consumed by its IT equipment, usually expressed in liters per kilowatt-hour. In the drought-prone regions where many of these facilities are situated, a high WUE is a direct drain on local aquifers. The Microsoft 2024 Environmental Sustainability Report discloses the company's total water consumption, a figure that has come under intense scrutiny as AI workloads demand aggressive thermal management.

The invisible link between a chat prompt and a gallon of water is increasingly documented. According to research from the University of California, Riverside, a large language model may evaporate roughly half a liter of water for cooling over every few dozen queries it processes. In Iowa, this translated into a struggle for local utilities to maintain water pressure for residents while satisfying the thirst of the servers. As noted by the World Resources Institute, such spikes in resource demand often lead to rising utility costs for residents.
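To make the "half a liter per few dozen queries" figure concrete, the sketch below converts it into a per-query estimate and scales it to a daily volume. It assumes "a few dozen" means 20 to 50 queries and uses a hypothetical load of 10 million queries per day; neither number comes from the research cited above.

```python
# Back-of-the-envelope cooling-water estimate per LLM query.
# Assumptions (illustrative only):
#   - 0.5 liters of water evaporated per "few dozen" queries
#   - "a few dozen" taken to mean 20 to 50 queries
#   - a hypothetical load of 10 million queries per day

WATER_PER_BATCH_L = 0.5


def water_per_query_ml(queries_per_batch: int) -> float:
    """Milliliters of cooling water attributed to a single query."""
    return WATER_PER_BATCH_L * 1000 / queries_per_batch


def daily_water_liters(queries_per_day: int, queries_per_batch: int) -> float:
    """Total liters evaporated for a given daily query volume."""
    return queries_per_day * WATER_PER_BATCH_L / queries_per_batch


if __name__ == "__main__":
    daily_queries = 10_000_000  # hypothetical traffic level
    for batch in (20, 50):
        print(f"{batch} queries per 0.5 L: "
              f"{water_per_query_ml(batch):.0f} mL/query, "
              f"{daily_water_liters(daily_queries, batch):,.0f} L/day")
```

Under these assumptions each query accounts for roughly 10 to 25 mL, and the hypothetical daily load works out to 100,000 to 250,000 liters.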

The scale of this thirst is not limited to a single county in Iowa. Global AI demand is projected to account for between 4.2 and 6.6 billion cubic meters of water withdrawal in 2027, as detailed in Nature's analysis of AI water footprints. This volume exceeds the total annual water withdrawal of the entire nation of Denmark. The industry is currently spending water to save electricity: evaporative cooling keeps its Power Usage Effectiveness (PUE) numbers low, effectively shifting the environmental cost into water use that PUE does not measure and the public rarely sees.
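The trade-off can be sketched with the two standard ratios: PUE (total facility energy divided by IT energy) and WUE (site water divided by IT energy, in liters per kWh). All facility figures below are hypothetical, chosen only to show how one cooling design can improve PUE while worsening WUE.

```python
from dataclasses import dataclass


@dataclass
class CoolingScenario:
    name: str
    it_energy_kwh: float        # energy used by servers, storage, networking
    overhead_energy_kwh: float  # cooling, power conversion, lighting, etc.
    site_water_liters: float    # water drawn on site, mostly for cooling

    @property
    def pue(self) -> float:
        """Power Usage Effectiveness: total facility energy / IT energy."""
        return (self.it_energy_kwh + self.overhead_energy_kwh) / self.it_energy_kwh

    @property
    def wue(self) -> float:
        """Water Usage Effectiveness: liters per kWh of IT energy."""
        return self.site_water_liters / self.it_energy_kwh


# Hypothetical annual figures for the same 100 GWh IT load.
IT_LOAD_KWH = 100_000_000
evaporative = CoolingScenario("evaporative", IT_LOAD_KWH, 12_000_000, 180_000_000)
chiller = CoolingScenario("air-cooled chiller", IT_LOAD_KWH, 35_000_000, 5_000_000)

for s in (evaporative, chiller):
    print(f"{s.name:>20}: PUE {s.pue:.2f}, WUE {s.wue:.2f} L/kWh")
```

With these made-up inputs, the evaporative design reports the better PUE (1.12 versus 1.35) while using 36 times as much water per kWh, which is exactly the cost a PUE-only scorecard leaves out.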


The Thermal Debt of Synthetic Intelligence

To understand why AI is more environmentally damaging than traditional cloud computing, one must look at the thermal density of the hardware. The transition from CPU-based workloads to GPU-based AI workloads is a generational jump in power density. A standard server rack in a traditional data center might pull 5 to 10 kilowatts of power. A rack filled with NVIDIA H100 or Blackwell GPUs can pull 40 to 100 kilowatts or more, a range consistent with the per-GPU power ratings in the NVIDIA H100 technical datasheet.
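A rough sketch of where a 40-kilowatt rack figure can come from. The 700 W per GPU is the maximum TDP NVIDIA lists for the H100 SXM; the server count per rack and the overhead factor for CPUs, memory, networking, fans and power-supply losses are hypothetical assumptions, not an NVIDIA reference design.

```python
# Rough power estimate for a GPU rack.
# Assumptions (hypothetical configuration):
#   - 700 W per GPU (H100 SXM maximum TDP)
#   - 8 GPUs per server, 4 servers per rack
#   - non-GPU components add a hypothetical multiplicative overhead


def rack_power_kw(gpu_watts: float, gpus_per_server: int,
                  servers_per_rack: int, overhead_factor: float) -> float:
    """Estimated rack draw in kilowatts."""
    gpu_total_w = gpu_watts * gpus_per_server * servers_per_rack
    return gpu_total_w * overhead_factor / 1000


if __name__ == "__main__":
    gpu_only = rack_power_kw(700, 8, 4, overhead_factor=1.0)
    with_overhead = rack_power_kw(700, 8, 4, overhead_factor=1.8)
    print(f"GPUs alone: {gpu_only:.1f} kW; with system overhead: {with_overhead:.1f} kW")
    # Compare against the upper end of a traditional 5-10 kW CPU rack
    print(f"Ratio to a 10 kW CPU rack: {with_overhead / 10:.1f}x")
```

The GPUs alone draw 22.4 kW here, and the assumed overhead lifts the rack to about 40 kW, roughly four times the upper end of a traditional rack.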

The physics are brutal: as you pack more transistors into a smaller space to minimize latency, the heat generated per square foot skyrockets. This has forced data center operators into a difficult choice between cooling methods: water-hungry evaporative systems or electricity-hungry mechanical chillers. Many operators choose evaporative cooling to keep their energy-efficiency metrics, chiefly PUE, low despite the massive water cost. This choice is often framed as a green initiative because it reduces electricity use, but it ignores the local ecological impact.

This thermal density makes the bigger-is-better model of LLM training a threat to regional climate stability. As models grow from billions to trillions of parameters, the energy needed to train them, and therefore the heat that must be removed, grows faster than the parameter count, because larger models are also trained on more data. The IEA Electricity 2024 report estimates that data centers already consume about 2% of global electricity and projects that their consumption could double by 2026. Existing cooling designs are struggling to keep pace with the thermal output of the newest silicon.
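One way to see why compute, and the heat it produces, grows faster than model size is the widely used rule of thumb that training a dense transformer takes about 6 FLOPs per parameter per training token. If the token count grows in proportion to the parameter count, as compute-optimal recipes suggest, compute grows with the square of model size. The roughly 20 tokens per parameter below is an assumed Chinchilla-style ratio, and the model sizes are illustrative.

```python
# Approximate training compute with the rule of thumb C ≈ 6 * N * D
# (N = parameters, D = training tokens) for dense transformers.
# Assumption: ~20 training tokens per parameter (compute-optimal style).

TOKENS_PER_PARAM = 20


def training_flops(params: float, tokens_per_param: float = TOKENS_PER_PARAM) -> float:
    """Approximate total training FLOPs for a dense model."""
    tokens = params * tokens_per_param
    return 6 * params * tokens


if __name__ == "__main__":
    base = training_flops(1e9)  # hypothetical 1B-parameter baseline
    for params in (1e9, 1e10, 1e11, 1e12):
        flops = training_flops(params)
        print(f"{params:.0e} params: {flops:.1e} FLOPs ({flops / base:,.0f}x the 1B model)")
```

Under these assumptions, ten times the parameters means a hundred times the compute. Real training runs often depart from the compute-optimal ratio, so treat this as a scaling intuition rather than a forecast.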

The Stargate Horizon and the Return of the Atom

The scale of planned infrastructure suggests the industry has no intention of slowing down. Reports of the Stargate project, a proposed $100 billion supercomputer, indicate a scale of construction that would dwarf any existing facility. To power such ambitions, tech giants are now turning toward nuclear energy. Microsoft recently signed a deal with Constellation Energy to restart a shuttered reactor at Three Mile Island to help power its AI operations.

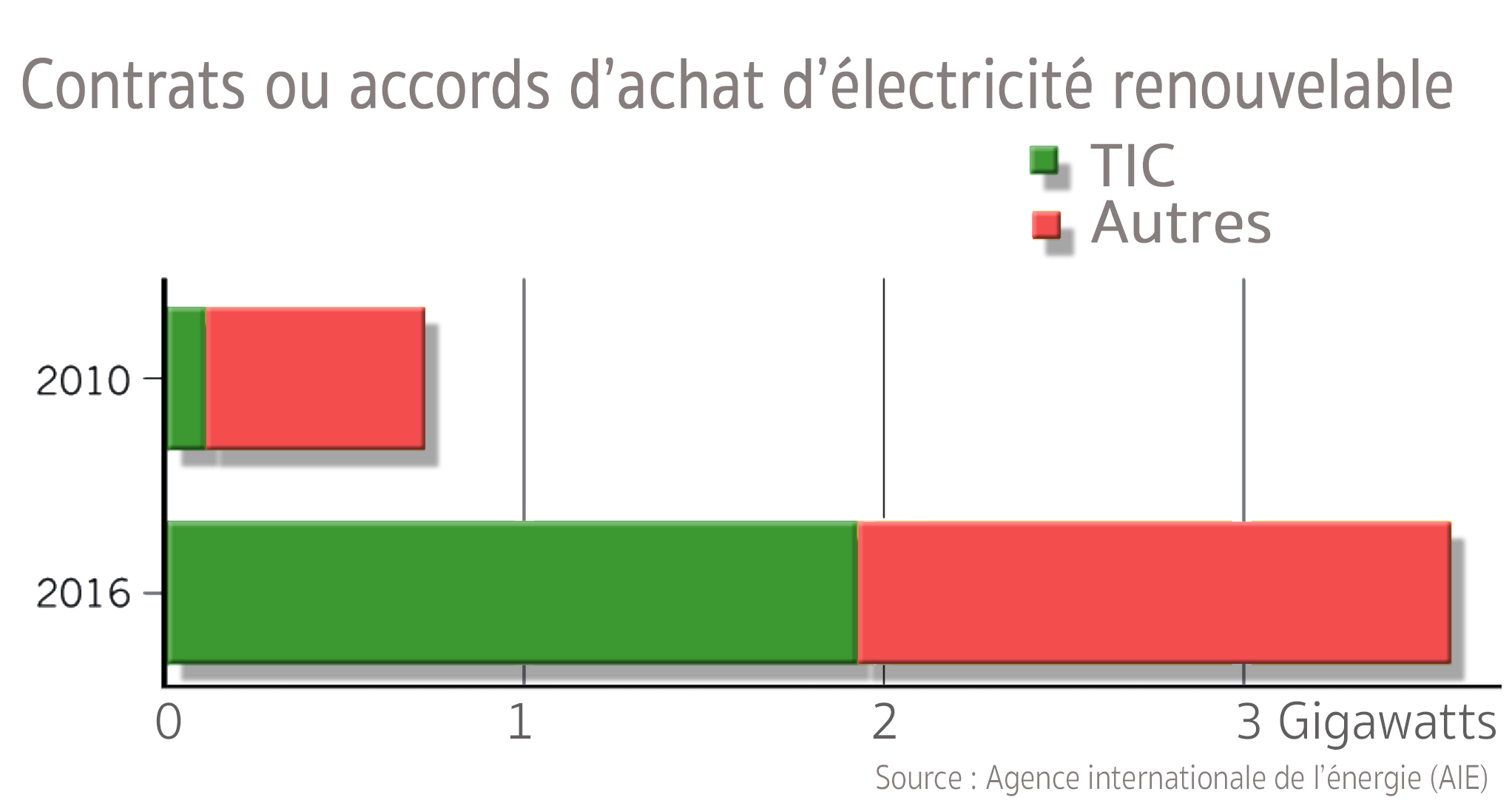

This shift toward dedicated nuclear power reveals the limitations of the existing renewable grid. While nuclear is carbon-free, the construction of these dedicated power sources takes years, if not decades. In the interim, the industry continues to rely on the standard grid, which remains heavily dependent on natural gas and coal in many data center hubs. The surge in demand is currently outpacing the rollout of new renewable capacity in almost every major tech corridor.

| Metric | 2023 Baseline | 2030 Projection |
|---|---|---|
| Global AI Energy Demand | ~50 TWh | ~554 TWh |
| Microsoft Emissions Growth | 29.1% (since 2020) | TBD |
| Google Water Consumption | 6.1 billion gallons | TBD |

A Goldman Sachs Research report estimates that AI will drive a 160% increase in data center power demand by the end of the decade. Meeting that demand amounts to building a parallel energy grid, and it is being built faster than most nations can modernize their transmission lines. This creates a geographic conflict where tech hubs compete with local populations for stable energy access.

The Scope 3 Mirage: Concrete, Steel, and Silicon

The most significant revelation in recent sustainability filings is the failure to manage Scope 3 emissions. These are indirect emissions that occur in the value chain of the reporting company, including construction and manufacturing. For Microsoft, this means the carbon footprint of the concrete, steel, and microchips required for new data centers. There is no cloud without massive physical buildings.

The manufacturing of the chips themselves is an intensive process that adds to the environmental debt. TSMC, which produces the majority of high-end AI chips, uses massive quantities of water and energy in its fabrication plants. As noted in the TSMC Sustainability Report, the production of a single wafer requires thousands of gallons of ultra-pure water. This upstream cost is often omitted from the carbon footprint calculations presented to AI end-users.

Furthermore, the construction industry is one of the largest contributors to global CO2 emissions. High-carbon concrete used in data center construction is a major contributor to the emissions surge reported by Microsoft. According to IEA cement industry data, the chemical process of making cement is inherently carbon-intensive. Tech companies often claim to be 100% renewable by purchasing Renewable Energy Credits (RECs), but RECs are accounting instruments tied to electricity use; they do nothing to offset the physical carbon released into the atmosphere during construction.
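"Inherently carbon-intensive" has a precise chemical meaning: clinker, the main ingredient of cement, is made by heating limestone until it gives off CO2 (CaCO3 → CaO + CO2), so that CO2 is released even if the kiln ran on perfectly clean fuel. The sketch below computes this process emission from stoichiometry. The 65% CaO content of clinker is an assumed typical value, it assumes all of that CaO comes from limestone, and fuel-combustion emissions are excluded.

```python
# Process CO2 from cement's calcination step: CaCO3 -> CaO + CO2.
# Uses standard atomic masses. Assumptions: clinker CaO fraction is a
# typical value supplied by the caller, all CaO derives from limestone,
# and kiln fuel emissions are not included.

ATOMIC_MASS = {"Ca": 40.078, "C": 12.011, "O": 15.999}

M_CACO3 = ATOMIC_MASS["Ca"] + ATOMIC_MASS["C"] + 3 * ATOMIC_MASS["O"]
M_CAO = ATOMIC_MASS["Ca"] + ATOMIC_MASS["O"]
M_CO2 = ATOMIC_MASS["C"] + 2 * ATOMIC_MASS["O"]


def co2_per_tonne_clinker(cao_fraction: float) -> float:
    """Tonnes of CO2 released by calcination per tonne of clinker."""
    # Each mole of CaO in the clinker released one mole of CO2 when formed.
    return cao_fraction * M_CO2 / M_CAO


if __name__ == "__main__":
    print(f"CO2 per tonne of limestone calcined: {M_CO2 / M_CACO3:.3f} t")
    print(f"CO2 per tonne of clinker (65% CaO, assumed): {co2_per_tonne_clinker(0.65):.3f} t")
```

Under these assumptions, roughly half a tonne of CO2 is released per tonne of clinker from chemistry alone, before any fuel is burned.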

Jevons Paradox in the Age of Tokens

The industry often defends its growth by pointing to the increasing efficiency of its hardware. However, this argument ignores the Jevons Paradox, an economic phenomenon where increased efficiency leads to higher overall consumption. Because NVIDIA makes chips that are more efficient at processing tokens, tech companies don't use less energy; they simply run more tokens. The efficiency gains are immediately eaten by the expansion of the market.
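The rebound effect reduces to one multiplication: total energy equals tokens generated times energy per token. The factors below are hypothetical; the sketch only shows that whenever token demand grows by a larger factor than per-token efficiency improves, total consumption rises.

```python
# Minimal illustration of the Jevons Paradox for token generation.
# Assumptions (hypothetical numbers): energy per token falls by an
# efficiency factor while token demand grows by a demand factor.


def total_energy(tokens: float, energy_per_token: float) -> float:
    """Total energy as tokens times energy per token (arbitrary units)."""
    return tokens * energy_per_token


def rebound(efficiency_gain: float, demand_growth: float) -> float:
    """Ratio of new total energy to old total energy.

    efficiency_gain: how many times less energy each token needs (e.g. 2.0)
    demand_growth: how many times more tokens are generated (e.g. 5.0)
    A result above 1.0 means the efficiency gain was more than eaten up.
    """
    before = total_energy(1.0, 1.0)
    after = total_energy(demand_growth, 1.0 / efficiency_gain)
    return after / before


if __name__ == "__main__":
    print(rebound(efficiency_gain=2.0, demand_growth=5.0))  # 2.5: consumption rises
    print(rebound(efficiency_gain=4.0, demand_growth=2.0))  # 0.5: efficiency wins
```

Halving the energy per token while generating five times as many tokens leaves total energy 2.5 times higher than before.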

This paradox is visible in the way LLMs are deployed. Instead of using smaller, specialized models, the industry has pushed for massive general-purpose models that are used for trivial tasks. Every time an AI is used to summarize an email that could have been read in ten seconds, the environmental cost of that hardware is amortized over a low-value output. The scale of usage is growing far faster than the efficiency of the chips can compensate for.

As Greenpeace Germany reports, the electricity demand for AI is rising faster than renewable capacity can be built. The shift from 50 TWh to 554 TWh by 2030 suggests the industry is effectively building a parallel energy grid. Unless the industry moves away from the bigger-is-better model of training, these sustainability pledges will remain unachievable.
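The two AI-demand figures cited here imply a specific growth path. This sketch computes it, treating 2023 and 2030 as the endpoints and assuming smooth compound growth in between, which real demand will not follow exactly.

```python
# Implied growth in AI electricity demand from ~50 TWh (2023)
# to ~554 TWh (2030). Both endpoints are approximate; the compound
# annual growth rate assumes a smooth exponential path between them.


def growth_summary(start: float, end: float, years: int) -> tuple[float, float, float]:
    """Return (multiple, percent increase, compound annual growth rate)."""
    multiple = end / start
    percent_increase = (multiple - 1) * 100
    cagr = multiple ** (1 / years) - 1
    return multiple, percent_increase, cagr


if __name__ == "__main__":
    multiple, pct, cagr = growth_summary(50, 554, years=2030 - 2023)
    print(f"{multiple:.1f}x, +{pct:.0f}%, {cagr:.1%} per year")
```

Under those assumptions, demand grows about elevenfold, an increase of roughly 1,000%, or about 41% per year compounded.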

The Grid Under Pressure: A Geographic Conflict

The impact of this expansion is most acute in data center hubs like Northern Virginia and Ireland. In Ireland, data centers now consume 21% of the nation's total electricity, according to The Guardian. This has led to moratoriums on new connections as the grid reaches its breaking point. The physical limits of the electrical grid are becoming the ultimate regulator of AI growth.

In the United States, data center growth in Loudoun County has forced utilities to reconsider the retirement of coal-fired power plants. The Washington Post reports that the sudden surge in demand is making it difficult for regional grids to transition away from fossil fuels. The AI boom is effectively subsidizing the continued operation of older, dirtier power plants that were scheduled for decommissioning.

This creates a tension between corporate climate goals and regional reality. While a company might buy wind power in a different state to offset its usage, the local data center is still pulling electrons from a grid powered by gas. This geographic mismatch is a central component of the industry's green narrative. It allows companies to claim a net-zero status while the physical sites of their operations continue to emit.

The Industry Response: Optimization as Salvation

In the face of these receipts, tech giants have pivoted to a defensive narrative: AI is not the problem, but the solution. Microsoft and Google argue that AI is an essential tool for accelerating the transition to clean energy. They point to research suggesting that AI-driven grid management can boost renewable output by optimizing intermittent power sources.

"Our carbon negative commitment includes three primary areas: reducing carbon emissions; increasing use of carbon-free electricity; and carbon removal," a Microsoft official statement asserts. Supporters of this view argue that the efficiencies gained in battery chemistry and material science will eventually offset the initial carbon debt of the hardware. Indeed, some case studies cited by Shaolei Ren show that AI can help balance smart grids.

However, this defense suffers from a significant timeline problem. The environmental costs of AI are immediate and massive, while the benefits remain theoretical and marginal. Even if AI delivers grid gains of up to 20%, those gains are being outpaced by the roughly 1,000% increase in AI energy demand projected for 2030. To argue that we must burn more carbon today to build the tool that might save us tomorrow is to assume that the interest on the climate debt is negligible.

The Analytical Verdict on the Decoupling

The evidence presented in the latest sustainability filings supports the thesis that a fundamental decoupling has occurred. Microsoft’s revenue is growing and its AI dominance is expanding, but its sustainability targets are receding. The goal of carbon negativity by 2030 is no longer a serious technical target; it is a legacy promise that has been superseded by the corporate priority of LLM supremacy.

The 29.1% increase in Microsoft's emissions is not a fluke; it is the first clear signal that the AI era is incompatible with the climate goals of the previous decade. The physics of the H100 and the strain on Iowa's water supplies are indifferent to corporate mission statements. We are currently witnessing a massive transfer of environmental resources into the development of automated chat systems.

Ultimately, the receipts are logged and the water is running out. Unless the industry finds a way to circumvent the Jevons Paradox, its climate pledges will stay out of reach. Whether the trade of planetary resources for synthetic intelligence is worth it is a question the tech giants seem determined to answer only after the concrete has set. For now, the emissions continue to rise, and the climate cost of the cloud is finally coming into focus.