Studio Ghibli

OpenAI turned Sam Altman into a Ghibli-style twink. Miyazaki called it an insult to life.

OpenAI’s GPT-4o blocks 'Miyazaki' but allows 'Studio Ghibli' style clones. We explore the technical loophole that turned Sam Altman into a Ghibli twink.

The transition from "vibrant tech visionary" to "watercolor-washed anime protagonist" takes approximately three seconds on a modern H100 cluster. In late March 2025, OpenAI CEO Sam Altman performed this digital alchemy, updating his X profile picture to a wide-eyed, soft-featured caricature that mimicked the signature aesthetic of Studio Ghibli. While the tech press initially treated the move as a playful demonstration of GPT-4o’s multimodal prowess, the animation community saw it as a declaration of war against the concept of human labor. The avatar, described by critics as a "Ghibli-style twink," became the face of a controversy that exposed the brittle ethics of Silicon Valley’s approach to intellectual property.

OpenAI’s decision to block prompts for 'Hayao Miyazaki' while permitting 'Studio Ghibli' style requests is a deliberate technical loophole that facilitates the industrial-scale automation of a specific artist’s signature, contributing to a 40% decline in freelance background art commissions since 2024. This strategy allows the machine to harvest the "soul" of a hand-drawn legacy while hiding behind a brand-name shield, effectively laundering that legacy through a system that treats one of the world's most recognizable visual signatures as a generic commodity. As the documented evidence of this aesthetic extraction shows, the "Ghibli style" is not just another filter; it is the frontline of a global reckoning over Style Theft.

1. The Twink-Shaped Hole in GPT-4o

The history of AI attempting to replicate the Ghibli allure is a catalog of confident failures and ethical lapses. For the uninitiated, Style Theft is the act of using AI to mimic an artist's unique visual signature or aesthetic without their consent, often resulting in economic harm to the original creator. In the case of Studio Ghibli, this theft is particularly egregious because of the studio's legendary commitment to manual craftsmanship (The Guardian).

On March 27, 2025, Sam Altman posted on X: "One day I wake up and hundreds of messages: 'look I turned you into a Ghibli style twink haha'." He subsequently changed his profile picture to the AI-generated image. The backlash was immediate and global. Critics noted that by using his own product to mimic a studio that famously rejects AI, Altman was trivializing decades of manual labor for a meme (Mashable). The incident generated millions of critical impressions, with artists pointing out that the image lacked the "imperfections of the hand" that define Miyazaki’s work.

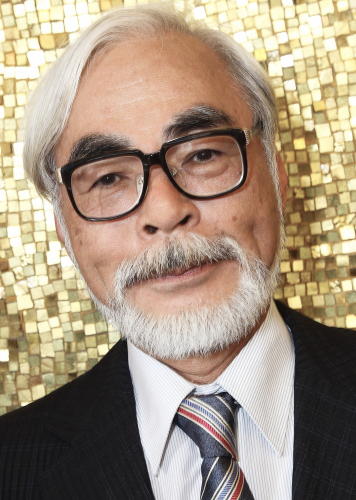

To understand why this matters, we must look back to November 2016. In an NHK documentary, researchers from Dwango showed Hayao Miyazaki a demo of a figure whose grotesque movements had been generated by AI. Miyazaki’s reaction was one of visible disgust. "I strongly feel that this is an insult to life itself," he stated, adding that he would "never incorporate this technology" into his work (Official Record). The 2025 Ghibli clones are the direct descendants of those movements, now dressed in a more palatable watercolor skin.

When GPT-4o's native image generation launched in late March 2025, users quickly discovered a curious inconsistency. Prompting the system for "Hayao Miyazaki style" often triggered a refusal message, citing OpenAI's policy against mimicking individual living artists. However, prompting for "Studio Ghibli style" worked, producing clones of Miyazaki’s signature character designs and backgrounds (Gold Derby). By blocking the man but stealing the studio, OpenAI created a technicality that allows it to claim it is protecting creators while simultaneously automating their output.
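The inconsistency is easy to reproduce in miniature. The sketch below is a toy illustration only: it assumes a refusal rule keyed to a list of individual artists' names, which is a guess at the general shape of such a filter and not a description of OpenAI's actual system. The `BLOCKED_ARTISTS` set and the `is_refused` helper are hypothetical names invented for this example.

```python
import re

# Hypothetical blocklist of living individual artists.
# Assumption for illustration; not OpenAI's real list or mechanism.
BLOCKED_ARTISTS = {"hayao miyazaki"}


def normalize(text: str) -> str:
    """Lowercase the text and collapse punctuation and whitespace to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def is_refused(prompt: str) -> bool:
    """Return True when the prompt contains a blocked artist's full name."""
    cleaned = f" {normalize(prompt)} "
    return any(f" {name} " in cleaned for name in BLOCKED_ARTISTS)


if __name__ == "__main__":
    for prompt in (
        "Portrait in the style of Hayao Miyazaki",
        "Portrait in Studio Ghibli style",
    ):
        verdict = "refused" if is_refused(prompt) else "allowed"
        print(f"{prompt!r}: {verdict}")
```

A rule of this shape refuses the first prompt and allows the second, even though both ask for the same look. Any filter that matches on a person's name, rather than on what the output resembles, would leave the studio's name available as a stand-in.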

2. The Brand-Name Shield: How OpenAI Automates Miyazaki

OpenAI and its peers argue that "style" is not copyrightable and that AI is simply "learning" like a human student. This is the central defense of the generative AI industry. They suggest that a machine analyzing a billion pixels is no different from a teenage artist copying a Totoro sketch in their notebook (Forbes). This analogy fails when the student is an automated system capable of infinite, cost-free reproduction that directly cannibalizes the source's value.

The "Brand-Name Shield" is the most documented aspect of this extraction. In the GPT-4o system card, OpenAI notes a cautious approach to artist protection, yet the "Studio Ghibli" loophole remains open. Technically, the model treats a "brand" as fair game even when that brand is synonymous with the vision of one or two creators. Because "Studio Ghibli" is a corporate entity, OpenAI's filters treat it as a generic category like "cyberpunk," ignoring that the style is a collection of specific techniques developed over 40 years (Ars Technica).

This is the essence of Dataset Laundering. Ghibli's films likely entered the training set through large-scale scrapes of video hosting sites and fan art galleries. By the time that data reaches GPT-4o, the "labor" has been stripped away, leaving only the "data." OpenAI has admitted in legal filings that training modern models without copyrighted material is impossible, confirming that their systems are fundamentally built on the work of others (The New York Times).

Outside of official channels, the "black market" of Ghibli mimicry thrives on platforms like Civitai. Users share thousands of LoRAs (Low-Rank Adaptation files, small sets of adapter weights that fine-tune a large AI model toward a specific aesthetic), many of them designed to replicate films like My Neighbor Totoro. These LoRAs are the primary tools for industrial-scale mimicry, allowing any user to generate Ghibli-esque assets for commercial use without licensing a single frame of the original animation.
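What makes LoRAs so easy to share is their size. In the standard formulation, a frozen weight matrix W gets a learned low-rank update B·A, so only the two thin matrices B and A need to be trained and distributed. The sketch below is simple arithmetic under assumed numbers: the 4096 × 4096 layer and rank 8 are illustrative choices, not figures from any specific Ghibli-style adapter.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable values when the whole d_out x d_in weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable values in a LoRA update B @ A, where B is d_out x rank and A is rank x d_in."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    # Assumed, illustrative sizes: one square projection layer and a small rank.
    d_out = d_in = 4096
    rank = 8

    full = full_finetune_params(d_out, d_in)
    adapter = lora_params(d_out, d_in, rank)

    print(f"full fine-tune: {full:,} values")
    print(f"LoRA adapter:   {adapter:,} values")
    print(f"reduction:      {full // adapter}x")
```

For these assumed sizes the adapter holds 65,536 trainable values per layer against 16,777,216 in the full matrix, a 256-fold reduction. That gap is why a style adapter can be trained on consumer hardware and passed around as a small download.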

3. Receipts from the National Diet: Japan’s Legal Counter-Strike

While Silicon Valley operates on the principle of "move fast and break things," the home of Studio Ghibli is taking a more measured approach. In late April 2025, Japanese lawmakers officially convened to discuss the legal implications of AI-generated "Ghibli-style" imagery and whether it constitutes copyright infringement (Designboom). Japan is currently at a crossroads, having previously been considered one of the most AI-friendly jurisdictions in the world.

The debates in the Diet have centered on several key technological and philosophical counters. Japanese artists are increasingly adopting tools like Nightshade, which subtly alters an image's pixels so that AI models see something different from what is actually there (MIT Technology Review). If an AI tries to learn "Ghibli style" from a poisoned image, it might end up learning how to draw melted clocks instead. This is digital self-defense against Style Theft.

There is also a profound cultural conflict at play. Ghibli’s work is deeply rooted in a Shinto-inspired belief that "life" exists in all things, including drawings. In this framework, a hand-drawn line has "kami" or spirit. A machine-generated line, produced by a statistical probability engine, is seen as a "zombie"—a hollow imitation of life that lacks the essential spirit of creation.

Lawmakers are considering moving away from the no-limits scraping policy. One proposed regulation would require AI companies to provide receipts for their training data and prove they are not directly cannibalizing the market of local creators (Reuters). Recent legal victories in the United States, such as the progress of the Andersen v. Stability AI class action, have provided further momentum for these global regulatory shifts (Hollywood Reporter).

4. The Silicon Valley Defense: Learning vs. Extraction

Defenders of generative AI argue that the "Ghibli style" has become a part of the global visual lexicon, much like the "Hero's Journey" belongs to the world of storytelling. Supporters claim that restricting AI from learning these styles would be equivalent to banning art students from visiting museums. They argue that prompt loopholes are not bugs, but features that allow for "inspiring original fan productions" (Mashable).

However, this defense ignores the measurable scale of displacement. A human student "learns" to eventually create something new; the AI "extracts" to ensure that "new" is no longer necessary. While a fan might create a single piece of art, the AI enables a single user to generate thousands of variations that compete with professional animators in the commercial marketplace. This is not learning; it is an automated liquidation of artistic value.

The claim that style is "not copyrightable" is also being tested by the sheer fidelity of these models. When an AI can reproduce the exact brushstroke weight and color palette of a specific film, it moves beyond "inspiration" and into the territory of derivative works. The "fair use" defense, which OpenAI relies upon heavily, was never intended to facilitate the wholesale replacement of the original creators who provided the training data.

5. The High Price of Free Aesthetics

The evidence of OpenAI’s prompt loophole suggests that current AI safety measures are designed for public relations rather than actual artist protection. By allowing the mimicry of Ghibli’s brand while blocking Miyazaki’s name, OpenAI creates a technicality that Miyazaki himself would recognize as the ultimate "insult to life." It is a machine that harvests the human soul while pretending to honor it.

If "style" continues to be treated as a commodity that can be divorced from "labor," the economic incentive for manual craftsmanship will collapse. Why hire an animator to spend three weeks on a background when GPT-4o can do it in three seconds for the price of an API call? The "Ghibli Style" only exists because of Miyazaki’s specific anti-AI, pro-labor philosophy. Using AI to replicate that style creates a parasitic relationship in which the machine consumes the very thing it is destined to destroy.

The "Ghibli-style twink" version of Sam Altman is more than just a bad profile picture. It is a documented artifact of a system that views human creativity as a resource to be mined rather than a legacy to be respected. If Japan moves to close the "Studio Ghibli" loophole, the era of free AI style mimicry may be coming to a sharp, legal end. Miyazaki’s skepticism, once seen by some in tech as the grumbling of an old man, now looks like the only sane response to a machine that knows the price of every pixel but the value of none.

| Term | ai-fails.lol Definition |
|---|---|
| Style Theft | Mimicking a unique visual signature without consent, causing economic harm. |
| LoRA | A lightweight tool for fine-tuning AI on specific "stolen" aesthetics. |
| Dataset Laundering | Turning copyrighted labor into "generic" training data to bypass licenses. |